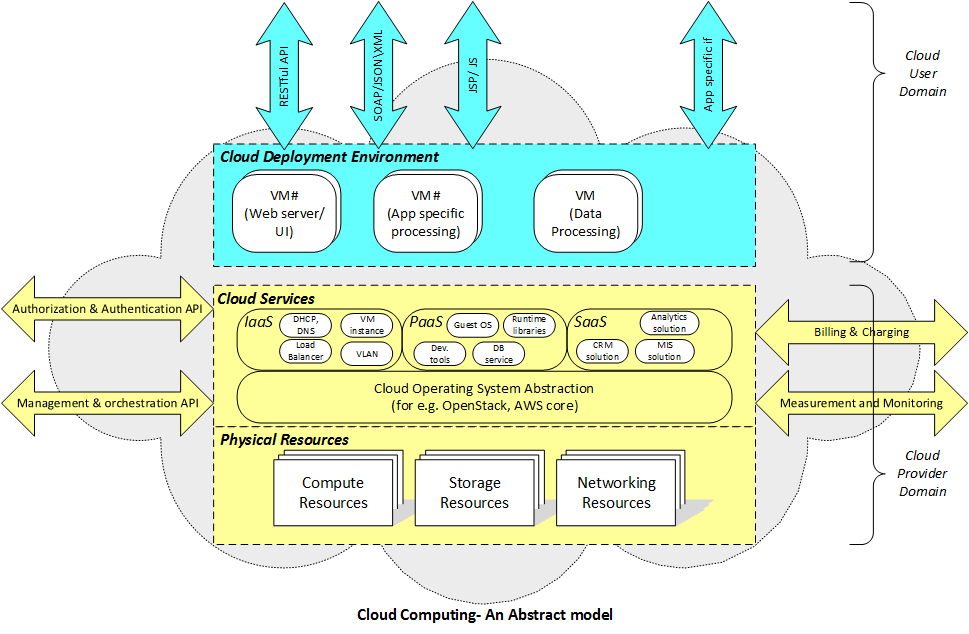

Cloud computing has become the norm for hosting software applications across a wide range of use cases and industry verticals. Cloud computing refers to Internet-based services that provide on-demand access to managed IT resources. These resources are maintained by the cloud provider and billed on a pay-per-use model. This lets application developers focus on the use case itself and deliver an MVP (minimum viable product) in a shorter time.

Let’s dig deep into some of the best practices you should follow for building cloud-ready applications that can make effective use of services offered by the cloud computing environment.

Some of the key areas for consideration are discussed in the following subsections:

The Right Cloud Infrastructure

To build a cloud-ready application, make sure the cloud infrastructure you deploy on is sized appropriately for your application: it should be neither over-utilized (degrading performance) nor under-utilized (wasting money).

For example, a cloud instance (virtual machine) with 1 core and 2 GB of RAM may be sufficient to deploy a simple web app that serves a limited number of requests. In contrast, a large enterprise running a resource-heavy application should consider an instance with a higher configuration. The same sizing principle applies to the other cloud services you use.

You can start with an instance configuration similar to that of your on-premises server, so that resources are not over-utilized and performance does not degrade. As demand grows, the instance size can be changed later; on AWS EC2, for example, changing the instance type of an EBS-backed instance requires stopping the instance first.
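The sizing advice above can be made concrete with a small helper that picks the smallest instance size covering the measured peak load plus some headroom. This is a minimal sketch: the catalog below is hypothetical (it does not list real instance types or prices), and the 30% headroom default is an assumption you should tune for your workload.

```python
# Hypothetical instance catalog, ordered smallest first: (name, vCPUs, memory in GiB).
# These are illustrative sizes, not real cloud instance types.
CATALOG = [
    ("small", 1, 2),
    ("medium", 2, 4),
    ("large", 4, 8),
    ("xlarge", 8, 16),
]


def pick_instance(peak_vcpus: float, peak_mem_gib: float, headroom: float = 0.3) -> str:
    """Return the smallest catalog entry that covers peak usage plus headroom."""
    need_cpu = peak_vcpus * (1 + headroom)
    need_mem = peak_mem_gib * (1 + headroom)
    for name, vcpus, mem_gib in CATALOG:
        if vcpus >= need_cpu and mem_gib >= need_mem:
            return name
    raise ValueError("No catalog entry is large enough; consider scaling out instead.")


# A simple web app peaking at half a core and 1.2 GiB fits the 1-core/2 GiB size.
print(pick_instance(0.5, 1.2))  # small
```

Re-running the helper with fresh peak measurements as demand grows tells you when the next size up is warranted.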

Scalability

Almost every cloud vendor provides services that automatically scale your application out or in depending on the number of user requests. When building cloud-ready applications, use these services to gain high availability, reduced operational effort and cost, on-demand provisioning, and automation.

For instance, with AWS Auto Scaling you can create an AMI (Amazon Machine Image) from a configured instance and then configure Auto Scaling to add new instances when CPU utilization or another metric crosses a threshold (say 80%). Similarly, you can configure it to remove an instance when CPU utilization drops below a lower threshold (say 10%).
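On AWS, the two thresholds above are typically expressed as Amazon CloudWatch alarms whose actions trigger scaling policies. The sketch below only builds the keyword arguments for the `put_metric_alarm` call of a boto3 CloudWatch client, so it runs without AWS credentials; the group name, the policy ARN placeholder, the 5-minute period, and the two evaluation periods are assumptions for illustration.

```python
def cpu_alarm_params(group_name: str, policy_arn: str, scale_out: bool) -> dict:
    """Build put_metric_alarm kwargs for a scale-out (>= 80%) or scale-in (< 10%) alarm."""
    return {
        "AlarmName": f"{group_name}-cpu-{'high' if scale_out else 'low'}",
        "Namespace": "AWS/EC2",
        "MetricName": "CPUUtilization",
        # Average CPU across all instances in the Auto Scaling group.
        "Dimensions": [{"Name": "AutoScalingGroupName", "Value": group_name}],
        "Statistic": "Average",
        "Period": 300,           # seconds per datapoint (assumed)
        "EvaluationPeriods": 2,  # breaching datapoints required before alarming (assumed)
        "Threshold": 80.0 if scale_out else 10.0,
        "ComparisonOperator": (
            "GreaterThanOrEqualToThreshold" if scale_out else "LessThanThreshold"
        ),
        # The scaling policy to execute when the alarm fires (hypothetical ARN).
        "AlarmActions": [policy_arn],
    }


high = cpu_alarm_params("web-asg", "hypothetical-scale-out-policy-arn", scale_out=True)
# With credentials configured, this could be passed as:
#   boto3.client("cloudwatch").put_metric_alarm(**high)
```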

You can configure the minimum and maximum number of instances in the Auto Scaling group. The minimum should be the number of instances needed during normal load hours, and the maximum should be the number needed during peak load hours (for example, during festivals, weekends, or special events).
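The interplay between the thresholds and the minimum/maximum bounds can be simulated in a few lines. This is a simplified model of a simple scaling policy (one instance added or removed per evaluation, no cooldown), and the function name and defaults are hypothetical. In an actual AWS Auto Scaling group, the bounds correspond to the group's `MinSize` and `MaxSize` settings.

```python
def next_capacity(current: int, cpu_percent: float, min_size: int, max_size: int,
                  scale_out_at: float = 80.0, scale_in_at: float = 10.0) -> int:
    """Return the instance count after one simple-scaling evaluation."""
    if cpu_percent >= scale_out_at:
        desired = current + 1
    elif cpu_percent < scale_in_at:
        desired = current - 1
    else:
        desired = current
    # Never go below the normal-load floor or above the peak-load ceiling.
    return max(min_size, min(max_size, desired))


# Simulate a quiet period, a peak, then a quiet period again.
capacity = 2
for cpu in [5, 85, 90, 95, 92, 50, 8, 6]:
    capacity = next_capacity(capacity, cpu, min_size=2, max_size=4)
    print(f"cpu={cpu}% -> {capacity} instances")
```

However long the peak lasts, capacity stays within the 2 to 4 range set by the bounds.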

Setting a minimum number of instances in the Auto Scaling group also makes the solution resilient to failure: Auto Scaling health checks detect failed instances and launch replacements, so the group does not stay below its configured capacity.

Application Architecture

Below are some points to take care of during the application engineering stage, before moving to the cloud:

- If multiple applications are deployed on different instances in the cloud, use private IP addresses rather than public IP addresses for communication between them. This keeps traffic on the provider's internal network, which typically lowers latency and avoids exposing internal services to the internet.

- If auto-scaling is being considered, remove hard-coded dynamic parameters such as IP addresses and absolute paths to log and config files from the application, so that it can be packaged as a machine image and launched on any instance.

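- Session Management: If the application runs on multiple nodes and uses server-side sessions, configure sticky sessions on the load balancer provided by the cloud vendor to bind a user's session to a specific instance. This ensures that all requests from that user during the session are sent to the same instance. Keep in mind that sessions bound to an instance are lost if that instance is terminated (for example, during scale-in); storing session state in a distributed cache avoids this.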

- Caching: Consider using a distributed caching model in the cloud. Most cloud vendors provide a managed caching service (for example, Amazon ElastiCache on AWS).

- Log Management: Your existing log management tools will also work in the cloud. However, consider a cloud-based service such as Loggly, or your vendor's own offering (for example, Amazon CloudWatch Logs), for centralized log management across instances.
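Removing hard-coded parameters usually means reading them from the environment (or a configuration service) at startup, so the same machine image works on every instance that Auto Scaling launches. A minimal sketch, assuming hypothetical variable names `APP_DB_HOST`, `APP_LOG_DIR`, and `APP_CONFIG_PATH` with illustrative defaults:

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class AppConfig:
    db_host: str
    log_dir: str
    config_path: str


def load_config(env: Optional[Mapping[str, str]] = None) -> AppConfig:
    """Read instance-specific settings from environment variables instead of code."""
    env = os.environ if env is None else env
    return AppConfig(
        # Supplied at launch time (e.g. a private IP or DNS name), not baked into the image.
        db_host=env.get("APP_DB_HOST", "localhost"),
        log_dir=env.get("APP_LOG_DIR", "/var/log/app"),
        config_path=env.get("APP_CONFIG_PATH", "/etc/app/app.conf"),
    )


config = load_config()
print(config.db_host)
```

Passing a plain dictionary instead of `os.environ` makes the loader easy to test.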

The application might require re-architecting to support high availability and scalability and to integrate with other cloud services.
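One common way to integrate the distributed cache mentioned in the list above is the cache-aside pattern: read from the cache first and, on a miss, load from the database and populate the cache. The sketch below uses a hypothetical in-process `TTLCache` class as a stand-in; in a real deployment the cache would be a shared service (such as Redis or Memcached on Amazon ElastiCache) so that every instance sees the same entries.

```python
import time
from typing import Any, Callable, Dict, Optional, Tuple


class TTLCache:
    """In-process stand-in for a distributed cache (hypothetical, for illustration)."""

    def __init__(self) -> None:
        self._store: Dict[str, Tuple[float, Any]] = {}

    def get(self, key: str) -> Optional[Any]:
        item = self._store.get(key)
        if item is None:
            return None
        expires_at, value = item
        if time.monotonic() >= expires_at:
            del self._store[key]  # entry expired; treat as a miss
            return None
        return value

    def set(self, key: str, value: Any, ttl_seconds: float) -> None:
        self._store[key] = (time.monotonic() + ttl_seconds, value)


def get_cache_aside(cache: TTLCache, key: str, load: Callable[[str], Any],
                    ttl_seconds: float = 60.0) -> Any:
    """Cache-aside read: try the cache, fall back to `load`, then populate the cache."""
    value = cache.get(key)
    if value is None:
        value = load(key)
        cache.set(key, value, ttl_seconds)
    return value


db_reads = []


def load_user(key: str) -> dict:
    db_reads.append(key)  # pretend this is a database query
    return {"id": key}


cache = TTLCache()
get_cache_aside(cache, "user:42", load_user)
get_cache_aside(cache, "user:42", load_user)
print(len(db_reads))  # 1: the second read was served from the cache
```

Note that in this sketch a loader that returns `None` is never cached, since `None` doubles as the miss signal.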

Data Management

When moving your data to the cloud, explore the storage options the provider offers and pick the best fit for each type of data in terms of durability, cost, latency, performance (response time), cacheability, and so on.

Some examples of storage types and the corresponding services (considering AWS as the vendor) are listed below:

- Block Storage (OS, Database): Amazon EBS

- Object Storage (Static Files): Amazon S3

- Archive Data Storage: Amazon S3 Glacier

- NoSQL Database Storage: Amazon DynamoDB
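The S3 and Glacier tiers above are often combined through an S3 lifecycle rule that moves objects to the archive storage class after a set number of days. The helper below only builds the `LifecycleConfiguration` argument accepted by boto3's `put_bucket_lifecycle_configuration`; the `logs/` prefix, the 90-day transition, and the bucket name in the comment are assumptions.

```python
from typing import Optional


def archive_lifecycle(prefix: str, days_to_glacier: int,
                      expire_after_days: Optional[int] = None) -> dict:
    """Build a lifecycle configuration that archives objects under `prefix` to Glacier."""
    rule = {
        "ID": f"archive-{prefix.strip('/') or 'all'}",
        "Filter": {"Prefix": prefix},
        "Status": "Enabled",
        "Transitions": [{"Days": days_to_glacier, "StorageClass": "GLACIER"}],
    }
    if expire_after_days is not None:
        # Sanity check for this sketch: only delete objects after they were archived.
        if expire_after_days <= days_to_glacier:
            raise ValueError("expire_after_days must be greater than days_to_glacier")
        rule["Expiration"] = {"Days": expire_after_days}
    return {"Rules": [rule]}


lifecycle = archive_lifecycle("logs/", days_to_glacier=90)
# With credentials configured:
#   boto3.client("s3").put_bucket_lifecycle_configuration(
#       Bucket="example-bucket", LifecycleConfiguration=lifecycle)
```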

Deployment

When it comes to the actual deployment of the application, the following points should be kept in mind.

- Multi-AZ: Deploy the application across multiple Availability Zones for high availability (HA) and fault tolerance (refer to the reference architecture of a highly available web hosting solution [4]).

- Application Backup: Back up your EBS volumes regularly using snapshots, and create an AMI (Amazon Machine Image) from your instance to save its configuration as a template for launching future instances.

- Elastic IP Address: Use an Elastic IP address instead of an auto-assigned public IP so that your EC2 instance's address does not change when the instance is stopped and started. Note that, by default, an account is limited to five Elastic IP addresses per Region; this quota can be raised on request.

- Storage: For production deployments, use separate EBS volumes for data and for the operating system.

- Security Groups: Configure the security group for your EC2 instance with the most restrictive rules that still let the application work (least privilege). For example, if an instance needs to be accessed only from certain IP addresses, its security group should allow inbound traffic from those addresses only.

- Virtual Private Cloud (VPC): Consider creating your own VPC for deployment on AWS if you want a logically isolated network for your organization, much like your own data center network. Within it, you can create your own public and private subnets, route tables, NAT gateways, etc.

- Auto Scaling: Configure auto-scaling to meet scaling requirements on demand (refer to tutorial [5] to understand how auto-scaling optimizes both resource usage and cost).
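The VPC and security group points above both come down to IP address planning. This sketch uses Python's standard `ipaddress` module to carve a VPC CIDR block into subnets and to check a source address against an allow-list, mimicking the allow-only behavior of security group inbound rules. The `10.0.0.0/16` block, the /24 subnet size, and the `203.0.113.0/24` office range are assumptions.

```python
import ipaddress
from typing import List


def plan_subnets(vpc_cidr: str, new_prefix: int, count: int) -> List[str]:
    """Split a VPC CIDR block into `count` equally sized subnets of /new_prefix."""
    network = ipaddress.ip_network(vpc_cidr)
    subnets = list(network.subnets(new_prefix=new_prefix))
    if count > len(subnets):
        raise ValueError(f"{vpc_cidr} holds only {len(subnets)} /{new_prefix} subnets")
    return [str(subnet) for subnet in subnets[:count]]


def is_allowed(source_ip: str, allowed_cidrs: List[str]) -> bool:
    """Allow-list check: traffic is permitted only if the source matches a rule."""
    address = ipaddress.ip_address(source_ip)
    return any(address in ipaddress.ip_network(cidr) for cidr in allowed_cidrs)


# e.g. one public and one private subnet in each of two Availability Zones.
public_a, public_b, private_a, private_b = plan_subnets("10.0.0.0/16", 24, 4)
print(public_a, private_b)  # 10.0.0.0/24 10.0.3.0/24

office = ["203.0.113.0/24"]
print(is_allowed("203.0.113.10", office))  # True
print(is_allowed("198.51.100.7", office))  # False
```

When sizing subnets, keep in mind that AWS reserves five IP addresses in every subnet (the first four and the last), so a /24 subnet provides 251 usable addresses rather than 256.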

Management and Monitoring

Cloud vendors like AWS provide tools (such as Amazon CloudWatch) to collect and track metrics (like CPU utilization, network I/O, network packets in/out, disk read/write bytes, etc.) from instances in real time, collect and monitor your application log files, set alarms, and automatically react to those alarms. You can use these tools to gain system-wide visibility into resource utilization, application performance, and application health.

For example, you can implement telemetry in your cloud application to measure its performance, send those measurements (or the log files that contain them) to the cloud monitoring service, and raise an alarm via SMS or email notifications when performance degrades. You can even configure an alarm to trigger an action, such as launching a new instance that immediately starts serving traffic.
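Application-level telemetry can be captured with a timing decorator and published as a custom metric. The sketch below records request latencies and builds the keyword arguments for the `put_metric_data` call of a boto3 CloudWatch client; the `MyApp/Performance` namespace, the `RequestLatency` metric name, the `Service` dimension, and the `handle_request` function are hypothetical. An alarm on this metric could then send the notifications described above.

```python
import time
from functools import wraps
from typing import Callable, List

# Latencies of completed calls, in milliseconds (kept in memory for this sketch).
latencies_ms: List[float] = []


def timed(func: Callable) -> Callable:
    """Record how long each call to `func` takes, even if it raises."""
    @wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            return func(*args, **kwargs)
        finally:
            latencies_ms.append((time.perf_counter() - start) * 1000.0)
    return wrapper


def latency_metric_payload(samples_ms: List[float], service: str) -> dict:
    """Build put_metric_data kwargs publishing the average latency of `samples_ms`."""
    if not samples_ms:
        raise ValueError("no samples to publish")
    return {
        "Namespace": "MyApp/Performance",
        "MetricData": [{
            "MetricName": "RequestLatency",
            "Dimensions": [{"Name": "Service", "Value": service}],
            "Value": sum(samples_ms) / len(samples_ms),
            "Unit": "Milliseconds",
        }],
    }


@timed
def handle_request() -> str:
    time.sleep(0.01)  # stand-in for real work
    return "ok"


handle_request()
payload = latency_metric_payload(latencies_ms, service="checkout")
# With credentials configured:
#   boto3.client("cloudwatch").put_metric_data(**payload)
```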

Security

Security is a vital consideration when migrating to the cloud. Cloud vendors generally follow a shared responsibility model for security: the vendor secures the underlying infrastructure, while you are responsible for securing the applications and data you deploy on it, using the tools the vendor provides.

For example, you are typically responsible for:

- Managing authorized access to the cloud services

- Configuring security groups or firewalls on your instances with inbound and outbound rules that allow only the necessary IP addresses and ports to reach your instance

- Enabling HTTPS to encrypt data in transit

- Enabling encrypted block storage (using standards such as AES-256) to encrypt data at rest
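Managing authorized access usually means granting each user or role only the permissions it needs. As an illustration, this sketch generates an AWS IAM policy document that allows read-only access to objects under one S3 prefix; the bucket and prefix names are hypothetical, and a real policy should be reviewed against your own access requirements.

```python
import json


def read_only_s3_policy(bucket: str, prefix: str = "") -> str:
    """Return an IAM policy (JSON) allowing s3:GetObject on bucket/prefix* only."""
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["s3:GetObject"],  # no wildcards: read objects, nothing else
                "Resource": f"arn:aws:s3:::{bucket}/{prefix}*",
            }
        ],
    }
    return json.dumps(policy, indent=2)


print(read_only_s3_policy("example-bucket", "reports/"))
```

Anything not explicitly allowed by a policy is denied by default, so a narrow statement like this is the least-privilege starting point.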

Testing

After re-engineering your application, verify that it still functions correctly. Create a separate QA or test environment on the cloud that replicates the production environment.

All functional and performance test suites should pass on the QA environment (on the cloud) before the application is released to the live environment.
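A performance suite usually ends in a pass/fail gate. One simple form, sketched below, blocks the release when the 95th-percentile response time measured on the QA environment exceeds a budget; the function names, the sample latencies, and the use of a p95 budget are assumptions for illustration, not a prescribed criterion.

```python
import statistics
from typing import Sequence


def p95(samples_ms: Sequence[float]) -> float:
    """95th percentile: quantiles(n=20) returns 19 cut points, the last being p95.

    The 'inclusive' method interpolates within the observed samples, so the result
    never exceeds the slowest measured request.
    """
    return statistics.quantiles(samples_ms, n=20, method="inclusive")[-1]


def performance_gate(samples_ms: Sequence[float], p95_budget_ms: float) -> bool:
    """Return True if the p95 latency is within budget."""
    if len(samples_ms) < 2:
        raise ValueError("need at least two samples")
    return p95(samples_ms) <= p95_budget_ms


qa_latencies = [120.0, 135.0, 128.0, 142.0, 210.0, 131.0, 125.0, 138.0, 129.0, 133.0]
print("release" if performance_gate(qa_latencies, p95_budget_ms=300.0) else "block")
```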

Backup and Disaster Recovery

Configure backup and disaster recovery (DR) approaches while moving to the cloud so that your data stays continually protected and you are prepared for the worst when it happens.

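The Recovery Time Objective (RTO) is the maximum acceptable time to restore your business to normal operating levels after a disruption. The Recovery Point Objective (RPO) is the maximum acceptable amount of data loss, measured as the time between the last recoverable copy of your data and the disruption.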
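With these definitions, a backup plan can be checked against your objectives with simple arithmetic. For periodic backups, the worst-case data loss is roughly one backup interval (a failure just before the next backup runs), and recovery takes at least as long as the restore. The sketch below makes those simplifying assumptions explicit; it ignores detection time, backup duration, and replication lag, and the names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BackupPlan:
    backup_interval_hours: float   # time between successive backups or snapshots
    restore_duration_hours: float  # time to restore service from the latest backup


def meets_objectives(plan: BackupPlan, rpo_hours: float, rto_hours: float) -> dict:
    """Compare a plan's worst-case data loss and restore time with the RPO and RTO."""
    worst_case_data_loss = plan.backup_interval_hours  # failure right before next backup
    return {
        "rpo_met": worst_case_data_loss <= rpo_hours,
        "rto_met": plan.restore_duration_hours <= rto_hours,
    }


nightly = BackupPlan(backup_interval_hours=24, restore_duration_hours=3)
print(meets_objectives(nightly, rpo_hours=1, rto_hours=4))
# {'rpo_met': False, 'rto_met': True}
```

Here a nightly backup misses a one-hour RPO, which points toward more frequent snapshots or continuous replication.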

Depending on your RTO and RPO, cloud vendor services support different solutions; on AWS, for example, asynchronous backup of your data to S3, snapshots, or multi-site solutions.

Build Cloud-Ready Applications with Ease

Building cloud-ready applications without the right expertise can lead to various issues. A managed service provider like HSC can help you develop scalable cloud-ready applications. Our expertise in cloud practices like microservices, DevOps, containers, and PaaS helps us architect, host, and platform new and existing applications with ease.

If you are looking for a cloud-native application development platform, please get in touch with us.
